Defending Against Watering Hole Attacks: A Deep Dive into Supply Chain Compromise Detection Using Behavioral EDR

Overview

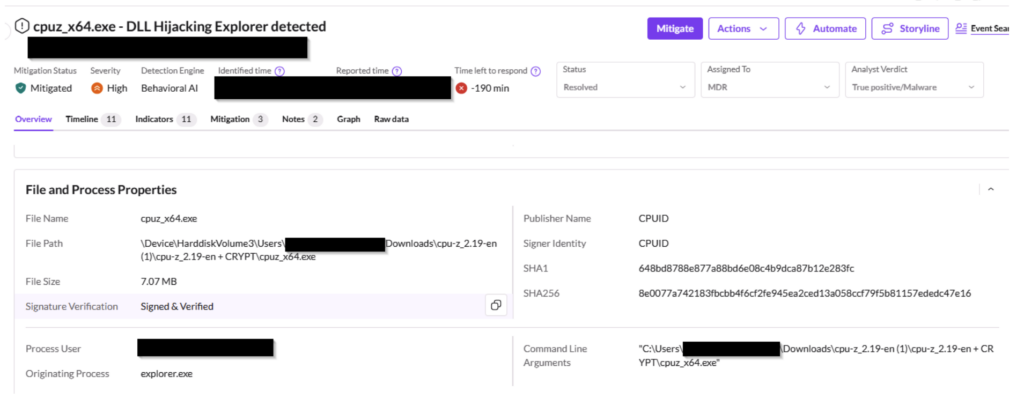

Software supply chain attacks are on the rise, exploiting the trust we place in legitimate vendors and their distribution channels. The CPU-Z watering hole attack of April 2026 serves as a stark reminder: even a trusted utility like CPU-Z can be weaponized. In this incident, threat actors compromised the CPUID domain at the API level, silently redirecting download requests to attacker-controlled infrastructure for approximately 19 hours. Users who navigated directly to the official site received a properly signed, legitimate-looking binary—but with a malicious payload bundled inside. The SentinelOne agent detected the anomaly within seconds, not by relying on signatures, but by observing what the process did after execution. This tutorial will walk you through the behavioral indicators that flagged the attack and how you can apply similar detection principles in your environment.

Prerequisites

Before diving in, make sure you have the following:

- A working understanding of endpoint detection and response (EDR) concepts

- Familiarity with basic process execution and memory management in Windows

- Familiarity with common threat actor techniques (process injection, shellcode)

- Access to an EDR that supports behavioral analysis (optional but recommended)

Step-by-Step Guide: Analyzing a Supply Chain Attack with Behavioral Indicators

This guide uses the CPU-Z incident as a case study. Each step corresponds to a behavioral signal the SentinelOne agent observed. You can follow along by reviewing similar suspicious process chains on your own endpoints.

Step 1: Recognize Anomalous Process Chains

The attack began when cpuz_x64.exe executed. Though the binary had a valid digital signature and was downloaded from the vendor’s own infrastructure, its subsequent behavior was abnormal. cpuz_x64.exe spawned powershell.exe, which then spawned csc.exe (the C# compiler), which in turn spawned cvtres.exe (the resource-to-object conversion utility that csc.exe invokes during compilation). Legitimate CPU-Z never does this. Look for parent-child relationships that deviate from the norm, especially when trusted processes suddenly launch scripting engines or compilers.
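
The chain check described above can be sketched in a few lines of Python. This is a minimal illustration, not the SentinelOne logic: the `ProcessEvent` record, the tool lists, and the `EXPECTED_PARENTS` allowlist are all hypothetical and would need tuning against your own telemetry.

```python
from dataclasses import dataclass

# Hypothetical categories; adjust for your environment.
SCRIPT_ENGINES = {"powershell.exe", "pwsh.exe", "cmd.exe", "wscript.exe", "mshta.exe"}
COMPILERS = {"csc.exe", "cvtres.exe", "msbuild.exe"}
TOOLS = SCRIPT_ENGINES | COMPILERS
# Applications for which launching these tools is assumed to be routine.
EXPECTED_PARENTS = {"explorer.exe", "devenv.exe"}


@dataclass(frozen=True)
class ProcessEvent:
    pid: int
    ppid: int
    image: str  # executable file name


def origin_of(event, by_pid):
    """Walk up from a tool to the nearest ancestor that is not itself a tool."""
    chain = [event.image.lower()]
    seen = {event.pid}
    parent = by_pid.get(event.ppid)
    while parent is not None and parent.pid not in seen:
        seen.add(parent.pid)
        chain.append(parent.image.lower())
        if parent.image.lower() not in TOOLS:
            return parent.image.lower(), list(reversed(chain))
        parent = by_pid.get(parent.ppid)
    return None, list(reversed(chain))  # origin unknown: parent data missing


def suspicious_chains(events):
    """Return chains where a script engine or compiler descends from an unexpected application."""
    by_pid = {e.pid: e for e in events}
    alerts = []
    for e in events:
        if e.image.lower() not in TOOLS:
            continue
        origin, chain = origin_of(e, by_pid)
        if origin is not None and origin not in EXPECTED_PARENTS:
            alerts.append(" -> ".join(chain))
    return alerts
```

Walking up to the nearest non-tool ancestor, rather than to the root of the tree, matters here: the root is usually explorer.exe, which would hide the fact that cpuz_x64.exe is the application that started the chain.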

Step 2: Detect Anomalous API Resolution

The malicious payload bypassed the OS loader by locating system functions through non-standard discovery methods. For example, it may have walked the loaded-module list and parsed DLL export tables by hand, matched export names against obfuscated or hashed strings before calling GetProcAddress, or issued direct syscalls. In your EDR, monitor for processes that call API functions via indirect addressing or manual resolution of DLL exports. This is a classic signal of packed or shellcode-based payloads.
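
To make "obfuscated strings" concrete, here is a small sketch of one widely documented scheme: comparing export names by a rotate-right-by-13 additive hash instead of by plain text. The source does not say which method this payload used, so treat the scheme, the `WATCHED_EXPORTS` list, and the helper names as assumptions chosen for illustration. An analyst can precompute the hashes and scan a memory dump for matching 32-bit constants.

```python
def ror32(value, bits):
    """Rotate a 32-bit integer right by `bits`."""
    bits %= 32
    return ((value >> bits) | (value << (32 - bits))) & 0xFFFFFFFF


def ror13_hash(name):
    """Rotate-right-by-13 additive hash over an ASCII export name."""
    h = 0
    for byte in name.encode("ascii"):
        h = ror32(h, 13)
        h = (h + byte) & 0xFFFFFFFF
    return h


# Illustrative list of exports that loaders and shellcode often resolve by hand.
WATCHED_EXPORTS = [
    "LoadLibraryA",
    "GetProcAddress",
    "VirtualAlloc",
    "VirtualProtect",
    "WriteProcessMemory",
    "CreateRemoteThread",
]
HASH_TO_NAME = {ror13_hash(name): name for name in WATCHED_EXPORTS}


def find_api_hashes(blob):
    """Scan bytes for little-endian 32-bit constants that match a watched hash."""
    hits = []
    for offset in range(len(blob) - 3):
        value = int.from_bytes(blob[offset:offset + 4], "little")
        if value in HASH_TO_NAME:
            hits.append((offset, HASH_TO_NAME[value]))
    return hits
```

A hit is only a lead, not proof: any 32-bit value can collide with a hash by chance, and real payloads often use different rotation counts or seeds.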

Step 3: Identify Reflective Code Loading

No file on disk corresponded to the executable code running in memory. The payload was loaded reflectively, a technique often used to circumvent file-based scanning. Check for memory regions that contain executable code without a matching image name or mapped file. Tools like Volatility or a commercial EDR can flag these; they typically appear as executable private (MEM_PRIVATE) regions rather than as MEM_IMAGE regions backed by a file on disk.
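
As a rough sketch of that check, the function below filters a list of region records for executable memory with no backing file. The `Region` record is a hypothetical shape for whatever your memory tooling exports; the numeric constants are the standard Windows values for memory type and page protection.

```python
from dataclasses import dataclass
from typing import Optional

# Windows memory type and page protection constants.
MEM_PRIVATE = 0x20000
MEM_MAPPED = 0x40000
MEM_IMAGE = 0x1000000
PAGE_EXECUTE = 0x10
PAGE_EXECUTE_READ = 0x20
PAGE_EXECUTE_READWRITE = 0x40
PAGE_EXECUTE_WRITECOPY = 0x80
EXEC_MASK = (PAGE_EXECUTE | PAGE_EXECUTE_READ
             | PAGE_EXECUTE_READWRITE | PAGE_EXECUTE_WRITECOPY)


@dataclass(frozen=True)
class Region:
    base: int
    size: int
    mem_type: int
    protect: int
    mapped_file: Optional[str]  # None when nothing on disk backs the region


def unbacked_executable(regions):
    """Return regions that are executable but have no file behind them."""
    return [r for r in regions if (r.protect & EXEC_MASK) and not r.mapped_file]
```

JIT runtimes also produce unbacked executable memory, so results are best read alongside the process name and the other indicators in this guide.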

Step 4: Spot Suspicious Memory Allocations

The agent saw Read-Write-Execute (RWX) memory permissions being requested. This is a staging pattern for malicious payloads. While some legitimate software may allocate RWX memory (e.g., JIT compilers), it’s unusual for a system utility like CPU-Z. In your detection rules, alert on any process that requests RWX memory and then executes code from that region—especially if the process is not a known runtime.
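
One way to express that rule over an event stream is sketched below. The event dictionaries, their field names, and the `KNOWN_JIT_RUNTIMES` allowlist are assumptions made for the example; real EDR telemetry will look different.

```python
PAGE_EXECUTE_READWRITE = 0x40  # Windows RWX page protection

# Assumed allowlist of runtimes that legitimately JIT-compile code.
KNOWN_JIT_RUNTIMES = {"chrome.exe", "msedge.exe", "java.exe", "node.exe"}


def rwx_then_execute(events):
    """Alert when a non-runtime process allocates RWX memory and later runs a thread from it.

    Each event is a dict with "kind", "pid" and "image"; "alloc" events add
    "base", "size" and "protect"; "thread_start" events add "address".
    Events are assumed to arrive in time order.
    """
    rwx_regions = {}  # pid -> list of (start, end) address ranges
    alerts = []
    for ev in events:
        if ev["image"].lower() in KNOWN_JIT_RUNTIMES:
            continue
        if ev["kind"] == "alloc" and ev["protect"] == PAGE_EXECUTE_READWRITE:
            start = ev["base"]
            rwx_regions.setdefault(ev["pid"], []).append((start, start + ev["size"]))
        elif ev["kind"] == "thread_start":
            for start, end in rwx_regions.get(ev["pid"], []):
                if start <= ev["address"] < end:
                    alerts.append((ev["pid"], ev["image"], hex(start)))
    return alerts
```

Requiring both halves of the pattern (the allocation and the later execution) keeps the rule quieter than alerting on every RWX request.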

Step 5: Pinpoint Process Injection Patterns

The execution flow indicated code being redirected into a secondary process to mask its origin. Specifically, the csc.exe and cvtres.exe instances were likely injected with shellcode. Monitor for cross-process operations (e.g., OpenProcess, WriteProcessMemory, CreateRemoteThread) that occur in rapid succession from a process that has no reason to touch another process’s memory.
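
The "rapid succession" condition can be modeled as an ordered sequence matched inside a time window. The sketch below assumes API-call telemetry is available as `(timestamp, source_pid, target_pid, api_name)` tuples sorted by time; that tuple shape and the five-second default window are illustrative choices, not values taken from the incident.

```python
from collections import defaultdict

INJECTION_SEQUENCE = ("OpenProcess", "WriteProcessMemory", "CreateRemoteThread")


def find_injection(calls, window_seconds=5.0):
    """Return (source_pid, target_pid, first_ts, last_ts) for each completed sequence."""
    progress = defaultdict(list)  # (source, target) -> timestamps of matched steps
    alerts = []
    for ts, src, dst, api in calls:
        if src == dst or api not in INJECTION_SEQUENCE:
            continue
        steps = progress[(src, dst)]
        # Discard a partial match that has aged out of the window.
        if steps and ts - steps[0] > window_seconds:
            steps.clear()
        # Advance only when the call is the next expected step.
        if api == INJECTION_SEQUENCE[len(steps)]:
            steps.append(ts)
            if len(steps) == len(INJECTION_SEQUENCE):
                alerts.append((src, dst, steps[0], ts))
                steps.clear()
    return alerts
```

Keying the state on the source and target pair means that unrelated calls from other processes cannot complete someone else's sequence.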

Step 6: Recognize Heuristic Shellcode Signatures

Sequential operations characteristic of automated exploitation toolkits, such as calling Sleep with a delay, followed by VirtualAlloc, then copying data, triggered a heuristic alert: “Penetration framework or shellcode was detected.” These patterns are often learned by EDR models. In practice, you can simulate them with tests from the Atomic Red Team library to tune your detection.

Common Mistakes

Mistake 1: Trusting Digital Signatures Alone

The CPU-Z binary was properly signed. Attackers compromised the vendor’s infrastructure, so the signature was valid. Relying solely on signature verification as a trust indicator is insufficient. Always combine with behavioral analysis.

Mistake 2: Ignoring Process Behavior

Many organizations monitor for known malware files but ignore what a process does after execution. CPU-Z normally doesn’t compile C# code. A simple rule like “csrss.exe launching cmd.exe” is a common indicator, but here the chain involved trusted executables in unusual roles. Build rules that model expected process trees for each application.
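
A simple way to model expected process trees is to learn parent-child pairs from known-good telemetry and flag pairs that were never seen. The sketch below assumes you can export history as `(parent, child)` image-name pairs; the `min_count` threshold is an arbitrary illustrative value.

```python
from collections import Counter


def learn_baseline(pairs, min_count=3):
    """Keep parent-child pairs seen at least `min_count` times in benign history."""
    counts = Counter((parent.lower(), child.lower()) for parent, child in pairs)
    return {edge for edge, seen in counts.items() if seen >= min_count}


def unexpected_edges(baseline, observed):
    """Return observed parent-child pairs that are absent from the baseline."""
    return [(p, c) for p, c in observed if (p.lower(), c.lower()) not in baseline]


history = (
    [("explorer.exe", "cpuz_x64.exe")] * 5
    + [("devenv.exe", "csc.exe")] * 4
    + [("csc.exe", "cvtres.exe")] * 4
)
baseline = learn_baseline(history)
observed = [
    ("explorer.exe", "cpuz_x64.exe"),
    ("cpuz_x64.exe", "powershell.exe"),
    ("powershell.exe", "csc.exe"),
    ("csc.exe", "cvtres.exe"),
]
print(unexpected_edges(baseline, observed))
```

In this made-up history, csc.exe launching cvtres.exe is normal on its own; the pairs that stand out are the ones leading into it, which is the point of modeling whole trees rather than single executables.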

Mistake 3: Overlooking the Supply Chain

The attack originated from the vendor’s own download button. Similar incidents like the GhostAction campaign (compromised GitHub maintainer) and npm maintainer phishing show that the trust chain can be broken upstream of the user. Always validate third-party updates by checking checksums from independent sources, and consider running them in isolated environments first.
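
Checksum validation is easy to script. The sketch below hashes a downloaded file with SHA-256 and compares it to a published value; where that published value comes from is up to you, and it only helps if the source is independent of the download site. The function names are chosen for this example.

```python
import hashlib
import hmac


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large installers do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published(path, published_hex):
    """Compare the file's SHA-256 with a published hex digest, ignoring case and whitespace."""
    return hmac.compare_digest(sha256_of(path), published_hex.strip().lower())
```

Note the limit of this control: if the attacker also controls the page that publishes the checksum, the comparison will pass, which is why an independent source matters.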

Summary

Behavioral detection is the key to stopping supply chain attacks like the CPU-Z watering hole. By watching what processes do, not just what they are, you can catch malicious activity even when the binary is signed and delivered from a trusted source. Implement the six indicators—anomalous process chains, API resolution, reflective loading, RWX memory, injection patterns, and heuristic shellcode—and combine them into detection rules. Remember: your EDR should be capable of autonomous response, just as SentinelOne’s agent terminated and quarantined the involved processes before the attack could progress. Stay vigilant, and never take a vendor’s infrastructure for granted.
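
Combining the indicators can be as simple as a weighted score with two thresholds. The weights and thresholds below are hypothetical placeholders meant to show the shape of such a rule; they are not derived from the incident or from any vendor’s model.

```python
# Hypothetical weights for the six indicators covered in this guide.
INDICATOR_WEIGHTS = {
    "anomalous_process_chain": 3,
    "anomalous_api_resolution": 2,
    "reflective_loading": 3,
    "rwx_memory": 2,
    "process_injection": 4,
    "shellcode_heuristic": 4,
}


def score(observed):
    """Sum the weights of the distinct indicators observed for one process tree."""
    names = set(observed)
    unknown = names - INDICATOR_WEIGHTS.keys()
    if unknown:
        raise ValueError(f"unknown indicators: {sorted(unknown)}")
    return sum(INDICATOR_WEIGHTS[name] for name in names)


def verdict(observed, alert_at=4, block_at=8):
    """Map a score to an action: log, alert, or block."""
    total = score(observed)
    if total >= block_at:
        return "block"
    if total >= alert_at:
        return "alert"
    return "log"
```

With these placeholder values, a single weak signal such as RWX memory is only logged, while several signals together cross the blocking threshold.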
