Securing Autonomous AI Agents in CI/CD: GitHub's Defense-in-Depth Strategy

Introduction

As software development pipelines increasingly integrate autonomous AI agents to automate tasks like code generation, testing, and deployment, the security challenges multiply. GitHub has detailed a defense-in-depth security architecture designed specifically for agentic workflows within CI/CD systems. The approach rests on three pillars: isolation, constrained execution, and auditability. By sandboxing environments, enforcing restricted permissions, and providing full execution traceability, GitHub aims to mitigate risks from prompt injection, privilege escalation, and unintended actions. This article explores GitHub's strategy and what it means for safe AI integration in modern CI/CD.

The Rise of Agentic Workflows

Agentic workflows are automated processes in which AI agents (autonomous software components) perform actions such as code review, patch generation, or dependency updates. Unlike traditional scripts or rule-based automations, these agents make context-aware decisions using large language models (LLMs) and other machine learning models. Their autonomy brings efficiency but also new attack surfaces. For instance, an agent could be tricked via prompt injection into executing malicious commands, escalating privileges, or leaking sensitive data. GitHub's architecture is a response to the urgent need for secure agentic operations.

Security Challenges in Agentic CI/CD

Agentic systems introduce unique threats that conventional CI/CD security measures are not designed to handle. Key risks include:

- Prompt injection: Malicious users craft inputs that cause the AI agent to behave outside its intended scope.

- Privilege escalation: Agents with excessive permissions may access or modify protected resources.

- Unintended actions: Autonomous decision-making can lead to erroneous or harmful operations, such as deleting production databases or deploying untested code.

- Data leakage: Agents might inadvertently expose internal code, credentials, or proprietary information.

To address these, GitHub's architecture does not rely on a single security measure but layers multiple controls.

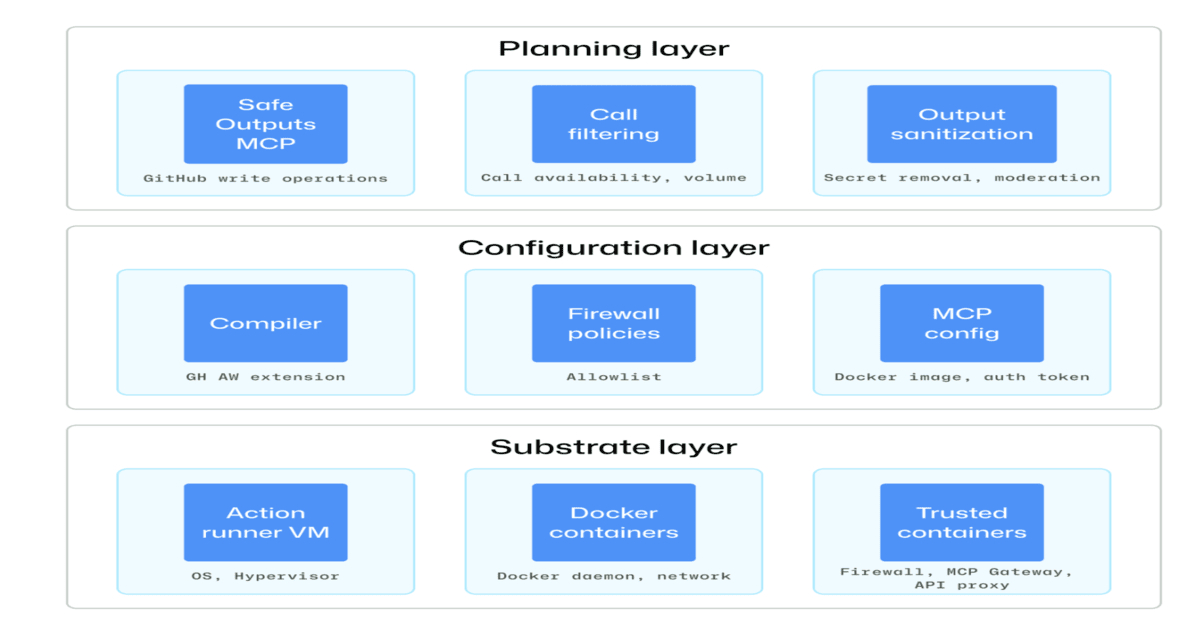

Defense-in-Depth Architecture Overview

GitHub's approach is modeled after zero-trust principles: assume that every agent action could be malicious or erroneous. The architecture comprises three core mechanisms:

- Isolation – Running agents in sandboxed environments that limit their reach.

- Constrained execution – Restricting permissions and capabilities to the minimum necessary.

- Auditability – Logging all actions for forensic analysis and compliance.

These mechanisms work together so that a failure in any one control is contained by the others.

Isolation and Sandboxing

Agents are executed in ephemeral, isolated sandboxes: containerized environments with no persistent network connections to internal systems. A separate sandbox is spawned for each agent instance, so even if one agent is compromised, the attacker cannot pivot to other parts of the pipeline. The sandbox restricts file system access, network calls, and system calls to a predefined allowlist. For example, an agent assigned to update dependencies can only read the repository’s package.json and write to a temporary patch file; it cannot access SSH keys or cloud API tokens.

Moreover, the sandbox is destroyed after the task completes, preventing any residual state from leaking. This ephemeral design also ensures that every agentic action starts from a clean, known-good environment.
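To make the allowlist idea concrete, here is a minimal sketch of a deny-by-default policy check for the dependency-update example. It is not GitHub's implementation: the `SandboxPolicy` class, its field names, and the `tmp/deps.patch` path are all hypothetical, and a real sandbox would enforce these rules at the container or kernel level rather than in application code.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class SandboxPolicy:
    """Hypothetical per-task policy: anything not listed is denied."""
    readable: FrozenSet[str]
    writable: FrozenSet[str]
    allowed_hosts: FrozenSet[str] = frozenset()

    @staticmethod
    def _normalize(path: str) -> Optional[str]:
        p = PurePosixPath(path)
        # Reject absolute paths and parent-directory traversal outright.
        if p.is_absolute() or ".." in p.parts:
            return None
        return str(p)  # "./package.json" becomes "package.json"

    def can_read(self, path: str) -> bool:
        norm = self._normalize(path)
        return norm is not None and norm in self.readable

    def can_write(self, path: str) -> bool:
        norm = self._normalize(path)
        return norm is not None and norm in self.writable

    def can_connect(self, host: str) -> bool:
        return host in self.allowed_hosts


# Policy for an agent that only updates dependencies (illustrative values).
policy = SandboxPolicy(
    readable=frozenset({"package.json"}),
    writable=frozenset({"tmp/deps.patch"}),
)
```

With this policy, `policy.can_read("package.json")` succeeds, while a request for `../.ssh/id_rsa` or a connection to any host is refused because nothing outside the allowlist is granted.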

Constrained Execution with the Principle of Least Privilege

GitHub enforces granular permission models for agents. Unlike a human developer who may have broad access, an AI agent receives a token with scoped permissions: for instance, only contents: read on a specific repository and pull-requests: write for creating PRs. These permissions are also time-limited, expiring after a short TTL (time-to-live).
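The combination of scoped permissions and a short TTL can be sketched as follows. This is an illustration of the idea only, not how GitHub issues or validates tokens: `AgentToken`, `issue_token`, `is_allowed`, the repository name, and the 10-minute TTL are all assumptions made for the example.

```python
import time
from dataclasses import dataclass
from typing import Dict, Optional

# Ordering of access levels: a "write" grant also satisfies a "read" check.
_LEVELS = {"none": 0, "read": 1, "write": 2}


@dataclass(frozen=True)
class AgentToken:
    """Hypothetical scoped credential for a single agent run."""
    repository: str
    permissions: Dict[str, str]
    expires_at: float  # Unix timestamp


def issue_token(repository: str, permissions: Dict[str, str],
                ttl_seconds: float, now: Optional[float] = None) -> AgentToken:
    issued_at = time.time() if now is None else now
    return AgentToken(repository, dict(permissions), issued_at + ttl_seconds)


def is_allowed(token: AgentToken, repository: str, scope: str,
               level: str, now: Optional[float] = None) -> bool:
    current = time.time() if now is None else now
    if current >= token.expires_at:
        return False  # token has expired
    if repository != token.repository:
        return False  # token is bound to one repository
    granted = token.permissions.get(scope, "none")  # unlisted scopes are denied
    return _LEVELS[granted] >= _LEVELS[level]


token = issue_token(
    "octo-org/app",
    {"contents": "read", "pull-requests": "write"},
    ttl_seconds=600,
)
```

Every check fails closed: an unknown scope, a different repository, or an expired token all result in a denial.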


In addition, runtime constraints are applied: agents cannot execute arbitrary shell commands unless explicitly allowed. Instead, they interact with a restricted API that exposes only safe operations (e.g., git commit via a validated wrapper). This minimizes the blast radius if the agent is misused.
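A validated wrapper of this kind might look like the sketch below. The source does not describe the actual API, so the names here (`plan_operation`, `build_commit_command`, `OperationNotAllowed`) and the commit-message rule are hypothetical. The point is that the agent names an operation from a fixed table instead of supplying a shell string.

```python
import re
from typing import Callable, Dict, List

# Conservative, illustrative rule for commit messages: short, single-line,
# and limited to a small set of characters.
_SAFE_MESSAGE = re.compile(r"[\w .,:;()#'/-]{1,200}")


class OperationNotAllowed(Exception):
    """Raised when an agent requests an operation outside the allowlist."""


def build_commit_command(message: str) -> List[str]:
    if not _SAFE_MESSAGE.fullmatch(message):
        raise OperationNotAllowed("commit message failed validation")
    # An argument list (not a shell string) avoids shell interpretation.
    return ["git", "commit", "-m", message]


# The only operations the agent may request; everything else is rejected.
ALLOWED_OPERATIONS: Dict[str, Callable[..., List[str]]] = {
    "commit": build_commit_command,
}


def plan_operation(name: str, **kwargs: str) -> List[str]:
    builder = ALLOWED_OPERATIONS.get(name)
    if builder is None:
        raise OperationNotAllowed(f"operation {name!r} is not allowed")
    return builder(**kwargs)
```

The returned argument list would then be executed without a shell, for example with `subprocess.run(argv, check=True)`. A request such as `plan_operation("push", remote="origin")` raises `OperationNotAllowed` because no such entry exists in the table.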

Auditability and Full Execution Traceability

Every action taken by an agent is recorded in an immutable audit log. This includes the input received, the agent’s reasoning (if available), the command executed, and the output. GitHub provides a dashboard where security teams can review agent activities in real time or retrospectively.

Traceability helps in incident response: if a rogue action is detected, teams can pinpoint the exact agent, the prompt that led to it, and the sandbox configuration. It also supports compliance requirements in regulated industries, where organizations must demonstrate the security of their pipelines.
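The source does not say how the log is made immutable. One common technique for making a log tamper-evident is hash chaining, where each entry stores the hash of the previous one. The sketch below assumes that technique; the `AuditLog` class and its record fields are hypothetical and are not GitHub's log format.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: Dict[str, Any]) -> str:
    # Canonical JSON so the same record always produces the same hash.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self._entries: List[Dict[str, Any]] = []

    def append(self, agent_id: str, prompt: str, command: str, output: str) -> str:
        record = {"agent_id": agent_id, "prompt": prompt,
                  "command": command, "output": output}
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        entry = {"record": record, "prev_hash": prev, "hash": _digest(prev, record)}
        self._entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self._entries:
            if entry["prev_hash"] != prev:
                return False
            if entry["hash"] != _digest(prev, entry["record"]):
                return False
            prev = entry["hash"]
        return True
```

Chaining detects modification but does not prevent it, so a production system would also store the log, or at least its latest hash, somewhere the agent cannot write.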

Implementation Best Practices

For organizations adopting agentic workflows, GitHub recommends several practices beyond its architecture:

- Prompt sanitization: Strip sensitive data from inputs before they reach the agent and validate prompt boundaries.

- Human-in-the-loop for high-risk actions: Require manual approval before an agent can merge code or deploy to production.

- Regular rotation of agent credentials: Use short-lived tokens and rotate them frequently.

- Continuous monitoring: Set up alerts for anomalous agent behavior, such as unexpected network calls or permission escalations.

GitHub’s defense-in-depth is not a replacement for these practices but an enabler for safer automation.
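Two of these practices, stripping secrets from inputs and gating high-risk actions on human approval, can be sketched in a few lines. Everything here is illustrative: the action names, the `authorize` function, and the two secret patterns are assumptions, and real secret detection needs far broader coverage than a pair of regular expressions.

```python
import re
from typing import Optional

# Hypothetical set of actions that must never run without a human sign-off.
HIGH_RISK_ACTIONS = frozenset({"merge", "deploy_production"})

# Illustrative patterns only: strings shaped like GitHub tokens and
# AWS access key IDs.
_SECRET_PATTERNS = [
    re.compile(r"gh[pousr]_[A-Za-z0-9]{20,}"),
    re.compile(r"AKIA[0-9A-Z]{16}"),
]


def redact_secrets(text: str) -> str:
    """Replace anything matching a known secret pattern before prompting."""
    for pattern in _SECRET_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text


def authorize(action: str, approved_by: Optional[str] = None) -> bool:
    """Low-risk actions pass; high-risk actions need a named approver."""
    if action in HIGH_RISK_ACTIONS:
        return approved_by is not None
    return True
```

Under these assumptions, `authorize("merge")` returns `False` until a reviewer is recorded, while opening a pull request proceeds without approval.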

Conclusion

GitHub’s security architecture for agentic workflows marks a significant step forward in safely integrating AI into CI/CD pipelines. By combining isolation, constrained execution, and auditability, the platform addresses the risks that come with autonomy: prompt injection, privilege escalation, and unintended actions. As AI agents become more prevalent, such layered defenses will be essential for maintaining trust and security in software delivery. Developers, security engineers, and IT leaders should consider these principles when designing their own agentic systems.
